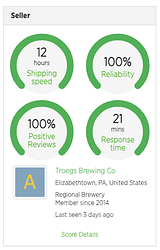

Here’s an overdue update: about a month ago we made the changes proposed in my previous post, and they seem to be working quite well. The seller’s most recent performance metrics are now prominently displayed on each listing. Here’s an example of a seller with a perfect score:

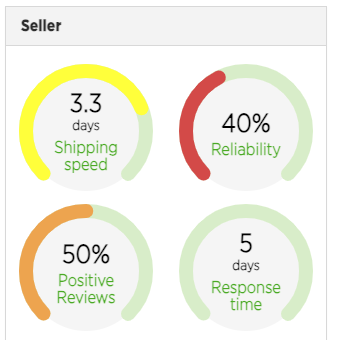

And here’s an example of a seller from whom I wouldn’t expect a great experience:

Buyers can quickly scan the metrics that are most important to them on any given listing, and the color of each seller performance metric draws attention to any problem areas. As proposed, each metric scores only the most recent 5 events to indicate recent performance. Here’s how each metric is calculated:

- Reviews = % of 5 most recent ratings the seller received that were positive

- Reliability = % of the last 5 sales that the seller did not cancel

- Shipping Speed = 100% if the carrier pickup scan occurs less than 24 hours (excluding shipping holidays and weekends) after the Hops Sold email is sent to the seller. Subtract 11% for each additional business day.

- Response Time = 100% - (1/7200 × (time of seller’s 1st response to a buyer-initiated message thread - time of buyer’s 1st message)). This applies to both orders and listings. Holidays and weekends are excluded. For additional nuances of Response Time, see here.
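To make the arithmetic concrete, here is a rough Python sketch of the first three metrics. It is an illustration only, not LEx's actual code: the function names are hypothetical, Reliability is treated as the share of recent sales that were not canceled (on the assumption that a higher score is better), partial business days are rounded down, and the shipping score is floored at 0%. The caller is assumed to have already excluded weekends and shipping holidays from the elapsed hours.

```python
WINDOW = 5  # each metric only looks at the 5 most recent events


def reviews_score(ratings_positive):
    """Percent of the 5 most recent ratings that were positive.

    ratings_positive: list of bools, oldest first (hypothetical input shape).
    Returns None when the seller has no ratings yet.
    """
    recent = ratings_positive[-WINDOW:]
    if not recent:
        return None
    return 100.0 * sum(recent) / len(recent)


def reliability_score(sales_canceled):
    """Percent of the last 5 sales the seller did NOT cancel (assumption).

    sales_canceled: list of bools, oldest first; True means the seller canceled.
    """
    recent = sales_canceled[-WINDOW:]
    if not recent:
        return None
    kept = sum(1 for canceled in recent if not canceled)
    return 100.0 * kept / len(recent)


def shipping_speed_score(business_hours_to_pickup):
    """100% for a pickup scan under 24 business hours, minus 11% per extra day.

    business_hours_to_pickup: hours between the Hops Sold email and the carrier
    pickup scan, with weekends and shipping holidays already removed.
    Rounding partial days down and flooring at 0 are assumptions.
    """
    extra_days = int(business_hours_to_pickup // 24)
    return max(0, 100 - 11 * extra_days)


if __name__ == "__main__":
    print(reviews_score([True, True, False, True, True, True]))
    print(reliability_score([False, False, True, False, False]))
    print(shipping_speed_score(30))
```

Under these assumptions, a seller whose pickup scan lands 30 business hours after the Hops Sold email is one business day late and loses 11 points.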

Scores are also now company-wide rather than per individual employee account. And don’t forget, you can always sort or filter listings by shipping speed or overall profile score.

Buyers can expect a great experience from the vast majority of sellers on LEx, but profile scores now do a better job of communicating a seller’s recent track record and warning buyers of problems before they make a purchase.

Thank you to the many brewers who provided feedback on improving profile scores. These changes were heavily influenced by the results of our most recent survey and the follow-up conversations I had with respondents.